Description:

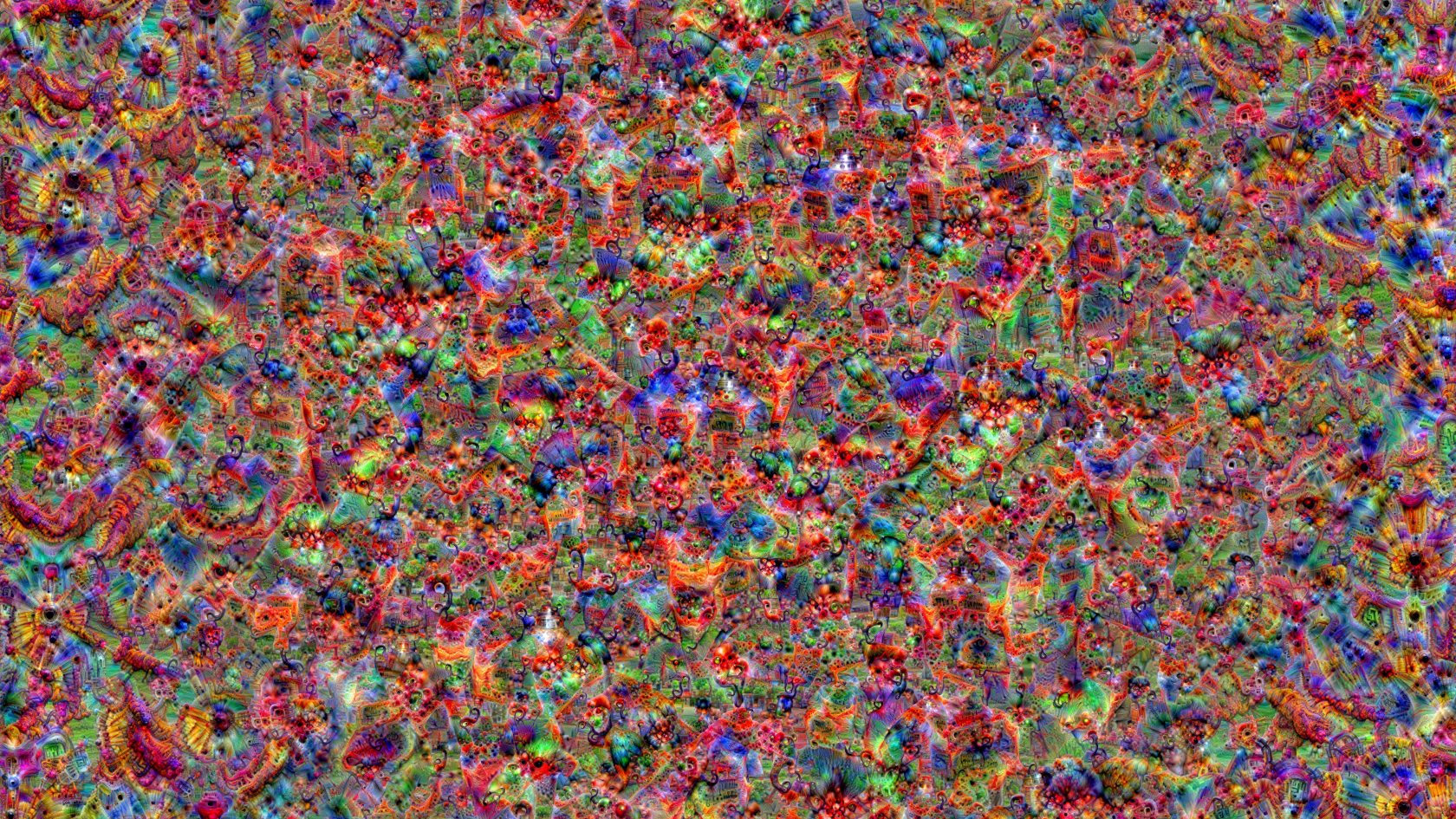

You are placed in a cube that represents the inside of a neural net. The images on the walls are obtained from a neural network through feature visualization, a technique that iteratively tweaks a network's input to trigger a chosen behavior. In AI research, feature visualization is used to interpret neural networks' decisions. The class probabilities come from InceptionV1, a convolutional neural network trained on ImageNet, a dataset of 14 million annotated images of everyday things. Normally it is used to classify images into one of 1,000 classes. It turns out that networks created for image classification have a surprising capacity for generating images, and the results are quite interesting visually. By reversing the original purpose of the network, Aizek (Michael Anoshenko) and Alg (Aleksandr Groznykh) take a look “inside the machine’s mind”. Their research focuses on understanding how a neural network forms its concept of the world. By using feature visualization, one can obtain the “perfect version” of any concept, or its “AI ID”.
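For readers curious about what "iteratively tweaking inputs" can look like in practice, here is a minimal sketch. It is not the artists' pipeline and it does not use InceptionV1: the "network" is a hypothetical one-layer softmax classifier with random weights, and `visualize_class`, `W`, `b`, and the step settings are all names and values assumed for illustration. The idea it shows is the same in spirit: start from near-blank input and repeatedly nudge it in the direction that raises the probability of one chosen class.

```python
import numpy as np

def softmax(z):
    # Numerically stable softmax over a 1-D vector of logits.
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def visualize_class(W, b, target, steps=200, lr=0.1, seed=0):
    """Gradient ascent on the INPUT of a toy linear-softmax classifier.

    W, b are hypothetical stand-ins for a trained network's weights.
    Returns the optimized input and the class probabilities it produces.
    """
    rng = np.random.default_rng(seed)
    x = rng.normal(scale=0.01, size=W.shape[1])  # start from near-blank input
    for _ in range(steps):
        p = softmax(W @ x + b)
        # For this toy model, d/dx log p[target] = W[target] - sum_k p[k] * W[k].
        grad = W[target] - p @ W
        x = x + lr * grad
        x = np.clip(x, -1.0, 1.0)  # keep "pixel" values in a bounded range
    return x, softmax(W @ x + b)

# Toy setup: 10 classes, 64 input "pixels", random weights (assumed, untrained).
rng = np.random.default_rng(42)
W = rng.normal(size=(10, 64))
b = np.zeros(10)

x_opt, probs = visualize_class(W, b, target=3)
print("most likely class:", int(probs.argmax()))
print("probability of target class:", float(probs[3]))
```

With a real convolutional network the gradient would come from automatic differentiation rather than a closed-form expression, and practical feature visualization typically adds regularization so the result looks like an image rather than noise.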